By Robert R Glaser, UX Architect

I make mistakes. Most of my greatest successes, in fact, came from lessons learned through failure. It would almost seem that any success that didn’t come from failure came from luck. Failure taught me, through experience and in very concrete terms, what would happen when I got something wrong.

We are in a deluge of “Top 10 lists”. This list worked out to 10 through coincidence, not by intent. I tend to loathe the common facile top ten list as clickbait or, worse, a set of ostensible and rudimentary rules that should have been learned at the “101” level of any area of study, much less expertise. Sometimes 10 is too many, and far too often it’s not enough. What irks me about this trend is the implication that expertise can come from reading these lists, the list below notwithstanding. It is a recipe approach to problem-solving, in this case in UX. The recipe approach provides answers to specific problems but never with enough background to really understand whether the solution you’re about to apply will actually solve the problem, or do so without causing other cascading problems.

So I thought I’d make a list of common problems caused by the “instant expertise” solutions these oversimplifications offer, and maybe provide a way of avoiding them by offering some direction rather than solutions.

I intentionally didn’t put illustrations here, since this article is meant to be thoughtful and to let you think of your own examples. Often when pictures are provided, people tend to draw conclusions too quickly and without a thorough understanding of the ideas.

1. Anything that can be distilled to a top ten list is cursory at best.

The internet is bursting with life hacks, memes, and other suggestions that seem so amazing you wonder how anyone even thought of them. At the same time, many of these suggestions for easier, faster, cheaper, better solutions are none of those things (or maybe just one).

It gets worse when you focus on a particular field of work like UX (though the problem is by no means limited to it). In these fields we are barraged with rules of thumb that are overgeneralized, or too specific, or highly limited, or so obvious that if you have to be told, you are in the wrong area of work or study.

Would you go to a doctor who refreshed their knowledge of current medical practices by looking at a top ten list of missed symptoms of a stroke? Yet these lists are commonly recommended as a formula for successful articles and blogs. I rarely come across a list where, after reading only one or two of the items, I don’t find myself thinking “except when…” followed by countless examples. The problem is not necessarily that the statements are overly simple, but rather their often-intractable claim to completeness. This is usually accomplished with prefixes like “Always…” or “Never…” or some similarly authoritarian language. By the way, this list is no exception, which is why I worded the first title as I did. The items here are meant to create awareness and begin dialogues, not to provide exact rules for advanced UX design.

2. Check your facts.

I see so many lists with factual-sounding statements (often dressed in technical jargon) that don’t even make sense. I would expect anyone reading this blog to be just as skeptical, and you should be.

I recently read an article about color use that popped up in my email from one of those sites that regularly publish articles about different aspects of design. As I began reading, I noticed this string of statements:

Firstly, we need a shared language of color terms and definitions. This handy list is from our quick guide to choosing a color palette.

The vocabulary of color

Hue: what color something is, like blue or red

Chroma: how pure a color is; the lack of white, black or gray added to it

Saturation: the strength or weakness of a color

Value: how light or dark a color is

Tone: created by adding gray to a pure hue

Shade: created by adding black to a pure hue

Tint: created by adding white to a hue

What bothered me was the arbitrary mix of terminology from subtractive color models (typically RYB or CMYK, associated with physical pigments and dyes) and additive color models (typically RGB, associated with light and digital displays). These terminologies shouldn’t be mixed, particularly when the list is preceded by the line:

Firstly, we need a shared language of color terms and definitions.

The article itself referenced another article that used a standard, “Painters Color Mixing Terminology”, which I had never heard of as a painter nor read about anywhere, implying that this was a standard taxonomy. I have heard many of the terms, but never arranged in such a structured and almost arbitrary way. One can simply use Photoshop to see that these seemingly defined terms fall apart when one assumes they mean something concrete with clear and consistent results. Additionally, the terms subtractive color and additive color matter a great deal to an everyday designer whose work may appear both in print and on the web. Understanding the differences between them is essential to the practical and technical aspects of implementing any quality aesthetic design.
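As a rough illustration of how a seemingly defined term can fall apart, here is a minimal sketch using Python’s standard `colorsys` module. The sample color is a hypothetical one chosen for illustration (pure red mixed halfway toward white, which the quoted list would call a tint) and does not come from the article being discussed. The same color reports two different “saturation” values depending on whether it is measured in the HSV model or the HLS model.

```python
import colorsys

# Hypothetical sample: pure red mixed halfway toward white
# (a "tint" in the vocabulary quoted above). Channels are in the 0.0-1.0 range.
tint_of_red = (1.0, 0.5, 0.5)

# colorsys returns (h, s, v) for HSV and (h, l, s) for HLS.
_, hsv_saturation, _ = colorsys.rgb_to_hsv(*tint_of_red)
_, _, hls_saturation = colorsys.rgb_to_hls(*tint_of_red)

print(f"HSV saturation: {hsv_saturation:.2f}")
print(f"HLS saturation: {hls_saturation:.2f}")
```

For this sample, HSV reports a saturation of 0.50 while HLS reports 1.00, so “the strength or weakness of a color” depends on which model is doing the measuring.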

Additionally, the referring article also included this quote as important information:

What colors mean

Red: energy, power, passion

Orange: joy, enthusiasm, creativity

Yellow: happiness, intellect, energy

Green: ambition, growth, freshness, safety

Blue: tranquility, confidence, intelligence

Purple: luxury, ambition, creativity

Black: power, elegance, mystery

White: cleanliness, purity, perfection

Any good UX designer would throw these out the window. What any color means changes wildly depending on the context, the contemporary zeitgeist, or the culture of the demographic it is aimed at. For example, in the US red often indicates warning or danger, whereas in Japan it indicates happiness. Even so, these meanings depend to a greater or lesser degree on context. Not every international-looking design is as internationally accepted as the frequency of its use would suggest.

These examples are just based on one article about color alone. Other examples are myriad.

While I see and peruse a lot of UX sites, there are very few I read with regularity. These are the ones I trust. I’m not naming them, because you as a reader (and I assume some of you are UX designers) should be selecting sites with some real critical thinking of your own. I have a library of real books I can peruse as well as a wealth of online peer-reviewed literature. But I always let a healthy amount of skepticism be my guide. Even Wikipedia can be good for some foundational material, as long as you check sources that seem too good to be true to make sure you’re not unconsciously p-hacking yourself. Always try to prove yourself wrong, not right. Proving yourself right is far easier, and ego makes the misleading results harder to refute.

Steve Allen, the late comic, composer, and writer, wrote in his book “Dumbth!” about the lack of critical thinking, particularly in the case of taking experts’ assessments, reviews, and endorsements as fact. This lack of discernment is a common weak link in the chain of thought, where rationalizing or biasing an opinion into fact has become a problem.

I welcome different perspectives and I’m sure that the statements I’ve made here are equally subject to them.

3. UX Design is an empirical science, psychology, and art.

I am almost pummeled with job offers for UX designers in which the science of UX is rarely mentioned in anything more than a vague or oversimplified reference to user testing. Sometimes they will add more of the traditional jargon, like wireframes (so often misused or misrepresented) and prototyping. The red flag is that these scientific aspects of UX design rarely come with any indication of support (financial or personnel). It’s as if all these tasks can easily and just as effectively be replaced by heuristics (often mine alone) and the use of “common sense”, which in UX research is often the foundation of the “false-consensus effect”. This substitution is often made, poorly, as a cost-saving and time-saving measure. I can use my experience and good heuristic testing practices as a triage for user testing, but they are a poor substitute.

If there is a lot of language in there regarding the importance/significance of creating visual design assets, design guides, or other more visual aspects of design, then I’ll usually pass or ask if they are really looking for a visual designer with some UX background – which is basically a visual designer.

Conversely, if they require a lot of coding knowledge, front-end development, CSS, JavaScript, etc., then I’ll usually pass or ask if they are really looking for a developer.

The reason isn’t that I can’t do visual design (it was my original study in college) or that I can’t code (I actually went back to college to learn programming), but that I want to design UX architecture. I don’t want to be a visual designer or software engineer. I like that I have enough knowledge and experience in these areas to address UX in a way that I know can be implemented, and if someone asks “how can that be implemented?”, I can explain in detail and with specific technical references. These two skills are important, but when I’m writing code or designing icon families, I’m not doing UX design.

4. UX Design should use the scientific method.

If you want to do an effective job at testing an interaction, test how it fails. Testing for success is easier to do, since it requires a far less rigorous approach, but it is also easier to cheat and bias the results, even without malicious intent. I’m not referring to grand p-hacking news stories (although they are relevant) but rather to the more subtle types of formative testing for the simplest of tasks.

I should also point out that the importance of appearance, while highly influenced by emotion and experience and therefore quite variable, is nonetheless measurable and should always be taken into account. This is where comparing quantitative and qualitative results can reveal some surprising information.

5. Visual design is far less important unless your product is a commodity.

Generally, products either have a unique differentiator (literally something no one else has) or a differentiator that is not unique but is significantly better (faster, more accurate, more complete, simpler, cheaper) than a competitor’s. In these first two cases, visual design (if it’s not an inherent function of the product) is one of the last things to be considered. This is because a differentiator needs to be both introduced and made to appear intuitive (even if it really isn’t). This is done through UX architecture such as user research, interactive design, formative iterative testing, and any other areas of ideation that the available resources (primarily people) allow.

All of this happens before the visual design is even addressed (again, excluding things that carry certain visual requirements, like standardized colors for stop/go, start/stop, and warning/failure). Even these, though, should remain as words for as long as possible.

The reason for this is that visual design biases early iterative testing into creating artificial mental models that become improperly reinforced.

But if the product is at the commodity level, such as a website for a store of any type, then the approach changes. Here visual design can begin far earlier, concurrently with the interactive design. In the early stages, branding concepts such as palettes, layout schemas, font and asset standards, and designs can be explored in a componentized and scalable manner, so that when the visual elements are integrated with the interactive elements, the work proceeds more harmoniously and collaboratively.

6. Formal training in UX or visual design isn’t just a “nice-to-have”.

As someone who has had formal training (i.e. university, or a program accredited nationally by a professional or governing body), been self-taught, and been taught on the job, I can say that each has its advantages. One of the greatest advantages of formal training is the reduction of ego in the design process. This isn’t just so that the designer can handle criticism, but so that they welcome it, learn from it, and apply it just as constructively to colleagues and reports. Early on in my career, I was occasionally suspicious if I didn’t get some criticism on a submission. It usually meant the work had been viewed in a cursory manner. While sometimes I would be told that my work was of a quality that didn’t require constant supervision (a wonderful thing to hear), it also quickly taught me that I had to improve my own self-review process, which I still do to this day.

Although I have learned many things through self-study (training books, online courses, and personal experiments), it lacks the second- and third-party interactive review throughout the process. Learning on the job can do far better at this, although the learning may be less consistent because of job requirements and deadlines.

I particularly enjoy mentoring whenever I can. I used to say “There are no stupid questions.” But now there is a caveat: “It isn’t stupid until you ask the same question over again.” I realize that someone may not fully understand something without realizing it, but there is also a point where it is up to the learner to ask more follow up questions until they understand why something is being done and not simply that it has to be done.

7. Context is everything.

The context of a user experience sometimes seems so obvious that it needn’t be recorded or enumerated. This is often an error. In formal or complex systems, recording it is essential, but in informal or common situations it should still be addressed, even if informally, so long as it is recorded somewhere in the process. The reason is that we often overlook obvious things because they are automatic in their execution, or because they are sensory input that we prefilter autonomically, such as background noise (audio, visual, or cognitive).

Two instances where I found this to have been a critical issue that was addressed far too late were:

- Designing (actually just updating) the UX for a car’s infotainment system. Just looking at the data on mental models for media players on phones and in cars gave a rough idea. Additionally, there were the company’s differentiating features, not the least of which was A.I. and machine learning in the media selection process. Tablets were used as a proxy, and even in-dash prototypes. All of these pieces of information were very helpful, but a flawed contextual assumption was testing without driving. While driver distraction was addressed in the assumptive paradigms, it was not tested in real-life situations. This required some significant changes to the design recommendations that ran counter to the product requirements, because of the simplest of use cases.

- When designing for an enterprise radiology workflow, I was aware that the majority of enterprise radiologists worked in dark rooms, and so this was taken into account in the design paradigm. However, when simply sitting and watching a variety of radiologists with different areas of specialization work in these dark rooms, it became apparent that differentiators in screen data not only could be reduced but had to be, as the current versions these radiologists were using were clearly distracting in a way that affected their attitudes while doing their diagnostic work. While this change was not asked for by users or listed by product management, once implemented, the response was overwhelmingly positive, with no negative responses.

Each of these issues was addressed, but later in the development cycle, where the resources and time required were far higher than they would have been had the issues been noted properly earlier on. Incidentally, these two were not the only instances, but they were two that could be described fairly briefly.

8. Flexible and scalable design or quick design.

Most people have heard the old saying “Better, faster, and cheaper: pick two.” UX design is similar, although simplified into two choices rather than three. You can’t do both, and you need to understand that each has its advantages and disadvantages. A design that is flexible and scalable requires much more time at the beginning, since a fairly detailed understanding of the user and system ecosystem is necessary to design in this way. This deeper understanding of the system allows a more componentized approach to UX design, since you can reduce the frequency and increase the speed of validation in the formative stages. Additionally, it can facilitate more scalable and agile engineering development once the designs reach that phase. Also, way down the road when you get to more summative testing, adaptation is easier and often allows faster decision making when there are important benchmarks and deadlines. This slower beginning, though, often requires a somewhat defensive stance, since there’s often not a lot to show soon. I should note that flexibility and scalability in design, while different, can fairly easily be designed concurrently. Addressing only one of them saves little time or resources, since the same resources and many of the same design considerations are used for both.

The quick design approach allows an MVP to get to market, or at least to shareholders and sales, far quicker. This approach of being fast and dazzling can be a boon to a startup with no product yet in the market. There is something very compelling about having a working product in hand as opposed to a prototype. It doesn’t show what it’s supposed to do but rather what it does do. The big drawbacks come when changes or a new version need to be made. Hardcoded applications require major rework, if not rebuilding the base code from scratch. Additionally, from the purely UX point of view, fast design, while providing a UX solution for that specific product (even if it’s the first design), is likely to create both expectations and mental models that are incompatible with new or different features. This can cause user fatigue, user conversion issues, and inconsistent user expectations caused by the earlier mental model, leading to frustration even though the newer, replacement, or updated product is better in terms of quality, features, and reliability.

Jared Spool wrote an excellent and more expansive article on this a few years ago called Experience Rot.

9. Don’t espouse good practices and then not follow them, or worse punish those who do.

So often there are things I’ve heard over and over in various companies. These are often well known (both because of popular repetition and the truth behind them). The biggest cause of this problem is fear, ego, or both.

- “Don’t be afraid to fail.” I’ve witnessed numerous punishments and more than a few firings over genuinely innovative initiatives that fell short, even though the reason for failure may have been insufficient development time or unknown variables, or the initiative succeeded but was judged a failure against the exact letter of the original hypothesis. In many of these cases, there was significant and usable invention and innovation that was often utilized later. These failures were punished because someone in a decision-making position felt threatened, or a program was canceled out of fear of failure rather than pursued for its potential for success, since the fear of job loss and sometimes fragile egos loom larger than accolades and ROI.

- “Experience is essential,” and then placing hierarchy over experience. In an ideal business, and in fact in many regular ones, good leadership will hire experts to advise and help the company succeed. Often, though, a long relationship with an industry does not mean a thorough understanding of it. Knowing the politics of an industry never equates to knowing the technology of it. They are separate domains of information and, like many areas of knowledge, each requires (1) study, (2) practice, (3) many failures, and (4) enough successes to rule out a luck factor, for genuine expertise. I know enough about corporate politics to realize that I want to be involved only when necessary and no more than that. But I also know that someone’s experience in the political aspects of a product or project doesn’t outweigh the technical/production side of it.

- Ending meetings without a confirmed and mutually understood consensus. I have been to so many meetings where not only was nothing decided, but virtually everyone left believing that there was a decision, and whatever they thought it was is what they went on to act on. Even a meeting where nothing is decided or resolved is OK as long as everyone leaves with that understanding. There are plenty of good, basic meeting best practices out there. My point is simply to follow them.

- “We have a long-term plan,” but then you realize that it can change week to week or even day to day based on knee-jerk reactions at the decision-making level, so often that more resources are spent spinning up and spinning down than actually producing anything. I often reference this in a paradigm I call the “Sitcom logic approach.” This is where an idea to do something is presented and on its first (and only) run fails hysterically (it is a sitcom, and fixing it wouldn’t be funny). Of course, what then happens is that the idea is abandoned for something else. No one tries to figure out what went wrong and whether it is fixable. These failure points are more likely to be minor missed considerations than catastrophic conceptual errors.

- “No” and “I don’t know” are negative only if viewed from an ego standpoint. Shutting someone down for saying “No” dismisses experience and knowledge that may go beyond your own. Shutting someone down for saying “I don’t know” dismisses curiosity and an opportunity to learn and innovate.

Adam Grant has spoken and written about the success of those whose careful use of “No” has improved their productivity, businesses, products, and more. I highly recommend Adam’s books and videos. If you follow through on that, I needn’t simply repeat it.

As for “I don’t know” in the design world, ego relegates it to a lack of intelligence and experience, when the opposite is generally true. Interestingly, it is the primary door to both knowledge and experience. First, when you say it, you are responding to a question or situation to which you have no answer or response. This provides you with exactly the subjects or concepts you need to learn about. While learning about those subjects, you have the opportunity to relate them to your own life experiences, often as they are happening around you. This provides the first form of experience, which is basic observation. The second form of experience comes when you decide to put the newly learned concepts and ideas into practice to see where and how they succeed and fail.

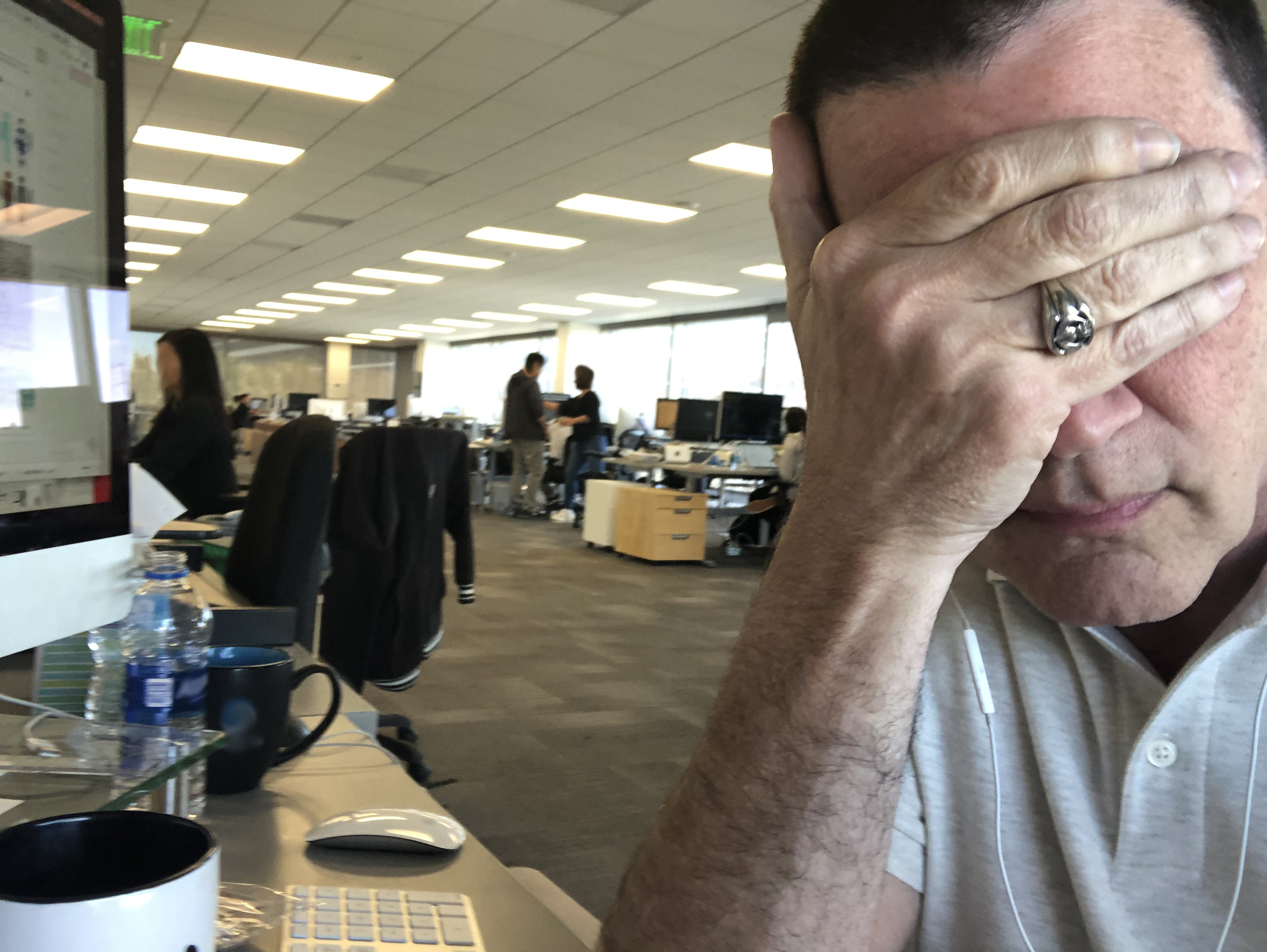

- “Standards” that are really just “Trends”. There are so many examples of this that I don’t think it would be too difficult to compile a top 100 of trends that became standards but still went away as trends do, just more slowly because far more money and time were invested in them. I’m going to use just one example: the open office environment. I have worked everywhere from office buildings with actual offices to “office” buildings that look like well-decorated warehouses with modern desks. I began seeing the first long-term studies almost 20 years ago referencing the actual inefficacy of the environment. Not surprisingly, studies continue to reveal the same thing.

- They are intended to foster collaboration – but they reduce it

- They are meant to create a more social environment – but they increase the need for privacy beyond what would be normal privacy expectations.

- They increase distraction

- They reduce productivity

- They increase offsite work even when that’s not the desired effect.

The part I find amusing is that most of the adaptations to the problems of the open office environment, things like privacy rooms, quiet areas, comfort areas, and gaming areas, are symptomatic cures that don’t address the actual problem.

10. The UX unicorn problem.

I am a UX architect who started out as a graphic designer (because that’s what we were called back then). I was an editorial art director in medical publishing, and I designed advertising for everything from food courts to couture retail. Then I got a job at Xerox. That began my journey into what was to become UX, through early (and unbelievably expensive) computer graphics and animation, and years of instructional design and early computer-based training, after which I went back to college to learn programming. This was useful since it taught me two things: first, how to design and write code, and second, that I didn’t want to be a programmer, but that it was incredibly useful to understand what was going on in code and how to engage with software engineers. I then spent time developing programs and learning about the complexities of how people interact with machines, computers, and sometimes simply objects. I got to work with a lot of testing environments, from summative testing in highly controlled labs with eye-tracking equipment to simple formative testing with both quantitative and qualitative results as needed. I did a stint at Gartner designing data visualizations for their top consultants for their world symposia, and designed the UX of VoIP systems (for everyone from regular users to administrators) for what used to be ShoreTel (now part of Mitel). I’ve designed radiology enterprise systems (PACS) and voice-controlled and voice-enabled vehicle infotainment systems.

With all that, I find the Unicorn designation to be problematic. While I can do a lot of things because I’ve had broad experience, I would rather apply all that experience to creating really elegant and effective UX. This doesn’t mean something spectacular, because that’s really more about visual design than about the process by which users accomplish what they set out to do. Nor does it mean a really interesting interaction experience, since that applies to game design more than to common UX. I have often said and will continue to say: if my work is noticed by the user, I have failed. This goes well beyond the rudimentary expression “If the UI needs to be explained, the UX has failed.”

Here is where the Socratic quote, “The more you know, the more you realize you don’t know,” becomes so apparent. This is important because, while the unicorn UXer seems to be able to do so many things, it also means that for all the time they are doing user research, they are not doing strategic design. For all the time they are organizing, running, and analyzing user tests, they are not designing wireframes. All the time they are creating visual assets, they are not establishing a design language with its documentation. All the time they are managing tactical aspects of implementation is time better spent on establishing the standards of Human Interaction Guidelines. Software development gets distributed and delegated, but there is so often an expectation, for no good reason, that a UX designer can do everything concurrently.

For all the boasting that UX is of paramount importance to many companies, so many invest precious few resources in it and fail to understand its complexity and process. So when I see a company looking for a UX designer who’s a unicorn, it’s typically going to be an underpaid position that will either set the employee up for scapegoating or burn them out in no time. On the other hand, it may be more likely that they are hiring for a position for which they do not really understand the importance of the needs of the user, all the while being certain that they “Just know because it’s common sense.” This dangerously and incorrectly commodifies UX design work and, worse than that, almost forces mediocre work. It removes the ability of the UX designer to design an elegant interaction and forces them into a high-speed triage situation. These situations do happen in the best of circumstances, and having a solid grounding in formal training and many years of experience increases the likelihood that the quick solution will be a good one. It is, however, a bad approach to design and development.

In summary

While I’m often surprised at the amazing outputs that came from the luck of an early idea being successful, I’m far more impressed when a successful outcome comes from diligent, well-thought-out work, since this kind of work is far more likely to lead to further successful improvements in the future.
